When the Association of Chartered Certified Accountants (ACCA) announced it would largely halt remote exams over rising concerns about AI-driven cheating, many saw it as proof that remote testing no longer works.

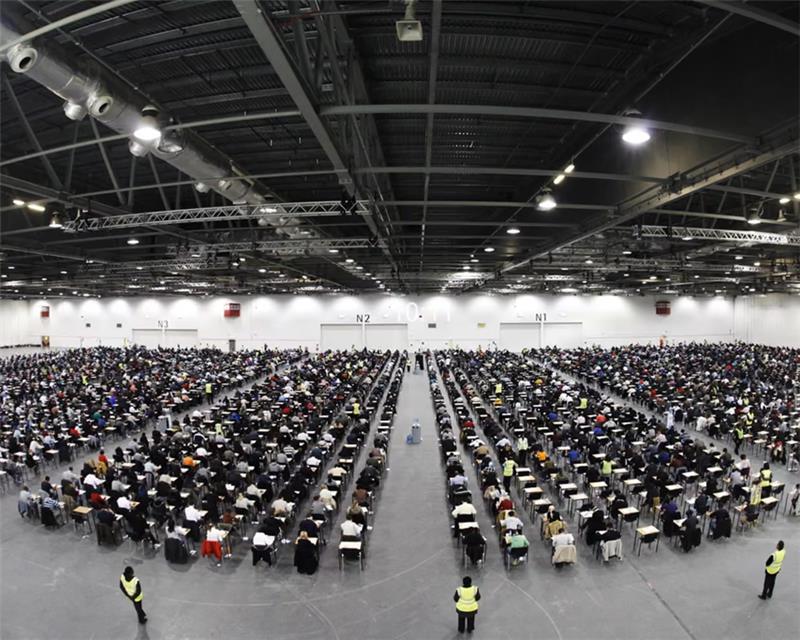

Close to 4,000 aspiring accountants sit for their ACCA exams at London’s ExCeL centre each session.

Source: https://www.theguardian.com/business/2025/dec/29/uk-accounting-remote-exams-ai-cheating-acca

That conclusion is understandable, but incomplete.

The real story is not that remote exams have failed.

The real story is that outdated remote proctoring models are no longer enough.

For years, many organizations relied on a narrow version of remote exam security:

A webcam

A browser lockdown tool

A human proctor watching a screen

A room scan at the start of the session

That may have worked in the pre-AI era.

It does not work in an era of:

Browser-based AI plugins

Secondary devices

Hidden earbuds

Screen overlays

Deepfakes

AI-generated responses

Item harvesting and answer sharing

The problem is not that remote exams are impossible to secure.

The problem is that many remote exam systems were never designed for the modern cheating landscape.

Remote Exam Security Was Built for Yesterday’s Threats

Many traditional remote proctoring solutions were designed around a simple assumption: If you watch the candidate closely enough, you can stop cheating.

That is no longer true.

Modern cheating rarely happens in plain sight.

Candidates can use:

Hidden phones

Off-screen devices

GPT-powered browser extensions

AI overlays

Silent prompting tools

Screen-sharing apps

Remote collaboration

The risk has expanded far beyond what a webcam alone can detect.

This is why organizations need to stop asking:

“Can remote proctoring stop cheating?”

And start asking:

“In an era of smarter cheating, how is your system actually preventing it?”

Cheating Does Not Start When the Exam Starts

One of the biggest misconceptions in the market is that cheating only happens during the exam session.

In reality, cheating often begins much earlier.

It can start with:

Weak item banks

Overexposed content

Reused forms

Predictable blueprints

Poor item rotation

Shared answer banks

Leaked questions on forums or the dark web

AI-generated practice responses that mirror operational items

That means organizations cannot rely on proctoring alone.

They need a layered integrity model that protects:

The content

The candidate

The device

The testing environment

The psychometric validity of the results

The Future of Secure Remote Exams Is Layered

The strongest assessment programs are no longer using a single security checkpoint.

They are using multiple layers of protection before, during, and after the exam.

Before the Exam

Modern assessment security begins long before the candidate logs in.

Organizations need:

AI-powered item generation with traceability

Item exposure monitoring

Enemy item detection

Honeypot content traps

Dynamic pool generation

Content monitoring across Web2, the dark web, and Web3 environments

Blueprint validation and psychometric review
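
As an illustration of item exposure monitoring, the minimal Python sketch below computes the share of candidates who have seen each item and flags items above a cutoff. The delivery log format, the function names, and the 0.4 threshold are assumptions made for this example, not a description of any vendor's implementation; real programs set exposure limits through their own psychometric policy.

```python
from collections import Counter


def exposure_rates(administrations, n_candidates):
    """Return {item_id: share of candidates who were shown the item}."""
    counts = Counter()
    for items_seen in administrations:
        # Count each item at most once per candidate.
        counts.update(set(items_seen))
    return {item: n / n_candidates for item, n in counts.items()}


def overexposed(rates, threshold):
    """Return the item IDs whose exposure rate exceeds the threshold."""
    return sorted(item for item, rate in rates.items() if rate > threshold)


# Hypothetical delivery log: one list of item IDs per candidate.
log = [
    ["Q1", "Q2", "Q3"],
    ["Q1", "Q4", "Q5"],
    ["Q1", "Q2", "Q6"],
    ["Q1", "Q7", "Q8"],
]

rates = exposure_rates(log, n_candidates=len(log))
flagged = overexposed(rates, threshold=0.4)  # assumed cutoff, for illustration
print(flagged)  # ['Q1', 'Q2']
```

Items flagged this way are candidates for rotation or retirement before they become widely shared.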

During the Exam

Exam integrity during delivery now requires much more than webcam monitoring.

Organizations need:

Dual monitor detection

Screen mirroring and recording detection

GPT plugin and AI overlay detection

Voice biometrics

Facial recognition

Keystroke analysis

Environmental monitoring

After the Exam

The strongest organizations also keep investigating after the exam session ends.

They review:

Candidate response times

Item exposure trends

Person-fit analysis

Item parameter drift

Differential item functioning

Suspicious score patterns

Pre-knowledge indicators

Behavioral anomalies

Content compromise signals

This is where psychometric intelligence becomes essential.

Because the goal is not just to detect suspicious moments.

The goal is to determine whether the score itself can be trusted.

In-Person Exams Are Not Immune to Cheating

Another common misconception is that moving back to test centers automatically solves the problem.

It does not.

In-person testing environments still face:

Proxy test takers

Hidden devices

Question review during bathroom breaks

Paper theft

Question memorization

Organized impersonation rings

Human proctor inconsistency

Answer sharing

The difference is that many organizations feel more comfortable controlling physical location.

But physical testing is not inherently more secure.

It is simply a different risk model.

In fact, modern remote testing environments often provide more forensic evidence than physical test centers typically capture.

Digital assessments can capture:

Screen activity

Device telemetry

Behavioral signals

Audio patterns

Identity checks

Environmental scans

Session logs

Candidate interactions

Real-time flags

That level of visibility is difficult to achieve in a traditional classroom or testing center.

The Future Is Not Remote Exams Versus In-Person Exams

The future is not about choosing between remote testing and physical testing.

The future is about choosing the right level of security for the right assessment.

Some exams may need:

AI-only monitoring

Record-and-review models

Hybrid delivery

Onsite testing

Secondary device monitoring

Device lockdown tools

Psychometric investigation

Organizations that continue using one-size-fits-all security models will struggle.

Organizations that adopt layered, adaptive, and data-driven integrity systems will be able to deliver secure exams anywhere.

Why ExamRoom.AI Is Different

Many remote proctoring vendors focus only on what happens during the exam.

ExamRoom.AI delivers a more comprehensive, end-to-end approach.

Rather than treating proctoring as a single event, ExamRoom.AI secures the entire assessment lifecycle.

Before the exam, ExamRoom.AI protects item banks, monitors content exposure, detects enemy items, uses honeypot techniques, supports dynamic pool generation, and monitors the web for leaked content.

During the exam, ExamRoom.AI combines AI proctoring, human oversight, ExamLock browser controls, Exam360 environmental monitoring, behavioral analysis, GPT extension detection, facial recognition, voice biometrics, and psychometric anomaly detection.

After the exam, ExamRoom.AI continues to evaluate item exposure, person-fit analysis, response time anomalies, pre-knowledge indicators, item parameter drift, differential item functioning, and suspicious scoring patterns.

This is what defines modern assessment security: an approach that goes beyond traditional methods to deliver a more comprehensive, adaptive, and resilient framework.

It is no longer enough to watch the candidate.

Institutions must protect the content, the device, the environment, the behavior, the score, and the integrity of the outcome.

That is why ExamRoom.AI positions itself as a Unified Assessment Platform™, not simply a remote proctoring vendor.

Final Thought

AI has changed cheating.

But it has not made remote exams obsolete.

It has raised the standard for what secure remote testing must be.

The institutions that succeed will not be the ones that abandon remote exams.

They will be the ones that move beyond webcam-only proctoring and build assessment ecosystems designed for the realities of modern, AI-driven cheating.

Because exam integrity is no longer about watching the candidate.

It’s about protecting the entire assessment lifecycle, from content creation to credential delivery.